Graph Foundation Models for Recommendation: A Comprehensive Survey

Jan 1, 2025


Bin Wu

Yihang Wang

Yuanhao Zeng

Jiawei Liu

Jiashu Zhao

Cheng Yang

Yawen Li

Long Xia

Dawei Yin

Chuan Shi

Abstract

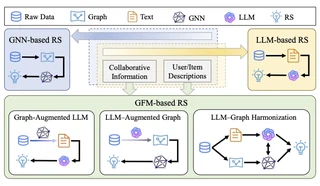

Recommender systems (RS) serve as a fundamental tool for navigating the vast expanse of online information, with deep learning advancements playing an increasingly important role in improving ranking accuracy. Among these, graph neural networks (GNNs) excel at extracting higher-order structural information, while large language models (LLMs) are designed to process and comprehend natural language, making both approaches highly effective and widely adopted. Recent research has focused on graph foundation models (GFMs), which integrate the strengths of GNNs and LLMs to model complex RS problems more efficiently by leveraging the graph-based structure of user-item relationships alongside textual understanding. In this survey, we provide a comprehensive overview of GFM-based RS technologies by introducing a clear taxonomy of current approaches, diving into methodological details, and highlighting key challenges and future directions. By synthesizing recent advancements, we aim to offer valuable insights into the evolving landscape of GFM-based recommender systems.

Publication

arXiv preprint arXiv:2502.08346

Authors

Yihang Wang

(he/him)

MEng Artificial Intelligence

I am currently a master’s student at the Institute of Computing Technology, Chinese Academy of Sciences, with a research focus on representation learning and information retrieval. I am passionate about exploring fundamental challenges in Natural Language Processing and related areas. I bring strong self-learning ability, solid research and engineering experience, effective communication skills, and a positive, collaborative attitude, and I have gained further research experience through participation in multiple academic projects.